
This text classification tutorial trains a recurrent neural network on the IMDB large movie review dataset for sentiment analysis.

Setup

import numpy as np
import tensorflow_datasets as tfds
import tensorflow as tf

tfds.disable_progress_bar()

Import matplotlib and create a helper function to plot graphs:

import matplotlib.pyplot as plt

def plot_graphs(history, metric):
    # Plot the training and validation curves for one metric across epochs.
    plt.plot(history.history[metric])
    plt.plot(history.history['val_'+metric], '')
    plt.xlabel("Epochs")
    plt.ylabel(metric)
    plt.legend([metric, 'val_'+metric])

Setup input pipeline

The IMDB large movie review dataset is a binary classification dataset: every review has either a positive or a negative sentiment.

Download the dataset using TFDS. See the loading text tutorial for details on how to load this sort of data manually.

dataset, info = tfds.load('imdb_reviews', with_info=True,
                          as_supervised=True)
train_dataset, test_dataset = dataset['train'], dataset['test']

train_dataset.element_spec

(TensorSpec(shape=(), dtype=tf.string, name=None), TensorSpec(shape=(), dtype=tf.int64, name=None))

Initially this returns a dataset of (text, label) pairs:

for example, label in train_dataset.take(1):
    print('text: ', example.numpy())
    print('label: ', label.numpy())

text: b"This was an absolutely terrible movie. Don't be lured in by Christopher Walken or Michael Ironside. Both are great actors, but this must simply be their worst role in history. Even their great acting could not redeem this movie's ridiculous storyline. This movie is an early nineties US propaganda piece. The most pathetic scenes were those when the Columbian rebels were making their cases for revolutions. Maria Conchita Alonso appeared phony, and her pseudo-love affair with Walken was nothing but a pathetic emotional plug in a movie that was devoid of any real meaning. I am disappointed that there are movies like this, ruining actor's like Christopher Walken's good name. I could barely sit through it." label: 0

Next, shuffle the data for training and create batches of these (text, label) pairs:

BUFFER_SIZE = 10000
BATCH_SIZE = 64

train_dataset = train_dataset.shuffle(BUFFER_SIZE).batch(BATCH_SIZE).prefetch(tf.data.AUTOTUNE)
test_dataset = test_dataset.batch(BATCH_SIZE).prefetch(tf.data.AUTOTUNE)

for example, label in train_dataset.take(1):
    print('texts: ', example.numpy()[:3])
    print()
    print('labels: ', label.numpy()[:3])

texts: [b'This is arguably the worst film I have ever seen, and I have quite an appetite for awful (and good) movies. It could (just) have managed a kind of adolescent humour if it had been consistently tongue-in-cheek --\xc3\xa0 la ROCKY HORROR PICTURE SHOW, which was really very funny. Other movies, like PLAN NINE FROM OUTER SPACE, manage to be funny while (apparently) trying to be serious. As to the acting, it looks like they rounded up brain-dead teenagers and asked them to ad-lib the whole production. Compared to them, Tom Cruise looks like Alec Guinness. There was one decent interpretation -- that of the older ghoul-busting broad on the motorcycle.' b"I saw this film in the worst possible circumstance. I'd already missed 15 minutes when I woke up to it on an international flight between Sydney and Seoul. I didn't know what I was watching, I thought maybe it was a movie of the week, but quickly became riveted by the performance of the lead actress playing a young woman who's child had been kidnapped. The premise started taking twist and turns I didn't see coming and by the end credits I was scrambling through the the in-flight guide to figure out what I had just watched. Turns out I was belatedly discovering Do-yeon Jeon who'd won Best Actress at Cannes for the role. I don't know if Secret Sunshine is typical of Korean cinema but I'm off to the DVD store to discover more." b"Hello. I am Paul Raddick, a.k.a. Panic Attack of WTAF, Channel 29 in Philadelphia. Let me tell you about this god awful movie that powered on Adam Sandler's film career but was digitized after a short time.<br /><br />Going Overboard is about an aspiring comedian played by Sandler who gets a job on a cruise ship and fails...or so I thought. Sandler encounters babes that like History of the World Part 1 and Rebound. The babes were supposed to be engaged, but, actually, they get executed by Sawtooth, the meanest cannibal the world has ever known. 
Adam Sandler fared bad in Going Overboard, but fared better in Big Daddy, Billy Madison, and Jen Leone's favorite, 50 First Dates. Man, Drew Barrymore was one hot chick. Spanglish is red hot, Going Overboard ain't Dooley squat! End of file."] labels: [0 1 0]
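The shuffle-buffer and batching steps can be imitated in plain Python to show what they do to the data. The sketch below only approximates the idea behind Dataset.shuffle(buffer_size): fill a fixed-size buffer, emit a random element from it, then refill. It is not tf.data's actual implementation. The helper names buffered_shuffle and batch are hypothetical, and the sketch assumes the 25,000 reviews of the IMDB training split:

```python
import random

def buffered_shuffle(items, buffer_size, seed=0):
    """Yield items in a pseudo-random order using a fixed-size buffer."""
    rng = random.Random(seed)
    buffer = []
    for item in items:
        buffer.append(item)
        if len(buffer) == buffer_size:
            # Buffer is full: swap a random element to the end and emit it.
            idx = rng.randrange(len(buffer))
            buffer[idx], buffer[-1] = buffer[-1], buffer[idx]
            yield buffer.pop()
    # Input exhausted: drain the remaining elements in random order.
    rng.shuffle(buffer)
    yield from buffer

def batch(items, batch_size):
    """Group items into lists of batch_size; the last batch may be smaller."""
    current = []
    for item in items:
        current.append(item)
        if len(current) == batch_size:
            yield current
            current = []
    if current:
        yield current

num_reviews = 25000
batches = list(batch(buffered_shuffle(range(num_reviews), 10000), 64))
print(len(batches), len(batches[-1]))  # 391 40
```

With a batch size of 64, 25,000 examples make 391 batches, and the last one holds only 40 examples. This matches the 391/391 steps per epoch in the training logs below.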

Create the text encoder

The raw text loaded by tfds needs to be processed before it can be used in a model. The simplest way to process text for training is the TextVectorization layer. This layer has many capabilities, but this tutorial sticks to the default behavior.

Create the layer, and pass the dataset's text to the layer's .adapt method:

VOCAB_SIZE = 1000
encoder = tf.keras.layers.TextVectorization(
    max_tokens=VOCAB_SIZE)
encoder.adapt(train_dataset.map(lambda text, label: text))

The .adapt method sets the layer's vocabulary. Here are the first 20 tokens. After the padding and unknown tokens, they're sorted by frequency:

vocab = np.array(encoder.get_vocabulary())
vocab[:20]

array(['', '[UNK]', 'the', 'and', 'a', 'of', 'to', 'is', 'in', 'it', 'i',
       'this', 'that', 'br', 'was', 'as', 'for', 'with', 'movie', 'but'],
      dtype='<U14')
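Here is a plain-Python sketch of what .adapt does with the default settings. It standardizes each text, splits it on whitespace, counts tokens, and keeps the most frequent ones after the reserved padding ('') and unknown ('[UNK]') entries. It only approximates TextVectorization. The punctuation regex and the alphabetical tie-breaking are assumptions, and build_vocab is a hypothetical helper. As with max_tokens, the vocabulary size here includes the two reserved entries:

```python
import re
import string
from collections import Counter

def standardize(text):
    # Approximates "lower_and_strip_punctuation": lowercase, then drop ASCII punctuation.
    return re.sub(f"[{re.escape(string.punctuation)}]", "", text.lower())

def build_vocab(texts, max_tokens):
    counts = Counter(tok for text in texts for tok in standardize(text).split())
    # Most frequent first; ties broken alphabetically (an assumption, for determinism).
    ranked = sorted(counts, key=lambda tok: (-counts[tok], tok))
    # Index 0 is padding, index 1 is the out-of-vocabulary token.
    return ["", "[UNK]"] + ranked[: max_tokens - 2]

corpus = [
    "The movie was great!",
    "The plot was thin, the acting great.",
    "I didn't like the movie.",
]
vocab = build_vocab(corpus, max_tokens=6)
print(vocab)  # ['', '[UNK]', 'the', 'great', 'movie', 'was']
```

Stripping punctuation explains the 'br' entry in the real vocabulary: the HTML line breaks "<br />" in the reviews standardize to the token "br".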

Once the vocabulary is set, the layer can encode text into indices. The tensors of indices are 0-padded to the longest sequence in the batch (unless you set a fixed output_sequence_length):

encoded_example = encoder(example)[:3].numpy()
encoded_example

array([[ 11,   7,   1, ...,   0,   0,   0],
       [ 10, 208,  11, ...,   0,   0,   0],
       [  1,  10, 237, ...,   0,   0,   0]])
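The encoding and padding step can also be sketched in plain Python. The helper encode_batch and the toy vocabulary are hypothetical. To keep the focus on index lookup and zero-padding to the longest sequence in the batch, the sketch only lowercases and splits on whitespace, with no punctuation stripping:

```python
def encode_batch(texts, vocab):
    index = {token: i for i, token in enumerate(vocab)}
    # Tokens missing from the vocabulary map to 1, the "[UNK]" index.
    sequences = [[index.get(tok, 1) for tok in text.lower().split()] for text in texts]
    longest = max(len(seq) for seq in sequences)
    # Pad every sequence with 0 (the "" padding index) up to the longest one.
    return [seq + [0] * (longest - len(seq)) for seq in sequences]

vocab = ["", "[UNK]", "the", "movie", "was", "great"]
encoded = encode_batch(["The movie was great", "a great movie"], vocab)
print(encoded)  # [[2, 3, 4, 5], [1, 5, 3, 0]]
```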

With the default settings, the process is not completely reversible. There are two main reasons for that:

- The default value for the standardize argument of TextVectorization is "lower_and_strip_punctuation".
- The limited vocabulary size and the lack of a character-based fallback turn some words into unknown tokens.

for n in range(3):
    print("Original: ", example[n].numpy())
    print("Round-trip: ", " ".join(vocab[encoded_example[n]]))
    print()

Original: b'This is arguably the worst film I have ever seen, and I have quite an appetite for awful (and good) movies. It could (just) have managed a kind of adolescent humour if it had been consistently tongue-in-cheek --\xc3\xa0 la ROCKY HORROR PICTURE SHOW, which was really very funny. Other movies, like PLAN NINE FROM OUTER SPACE, manage to be funny while (apparently) trying to be serious. As to the acting, it looks like they rounded up brain-dead teenagers and asked them to ad-lib the whole production. Compared to them, Tom Cruise looks like Alec Guinness. There was one decent interpretation -- that of the older ghoul-busting broad on the motorcycle.' Round-trip: this is [UNK] the worst film i have ever seen and i have quite an [UNK] for awful and good movies it could just have [UNK] a kind of [UNK] [UNK] if it had been [UNK] [UNK] [UNK] la [UNK] horror picture show which was really very funny other movies like [UNK] [UNK] from [UNK] space [UNK] to be funny while apparently trying to be serious as to the acting it looks like they [UNK] up [UNK] [UNK] and [UNK] them to [UNK] the whole production [UNK] to them tom [UNK] looks like [UNK] [UNK] there was one decent [UNK] that of the older [UNK] [UNK] on the [UNK] Original: b"I saw this film in the worst possible circumstance. I'd already missed 15 minutes when I woke up to it on an international flight between Sydney and Seoul. I didn't know what I was watching, I thought maybe it was a movie of the week, but quickly became riveted by the performance of the lead actress playing a young woman who's child had been kidnapped. The premise started taking twist and turns I didn't see coming and by the end credits I was scrambling through the the in-flight guide to figure out what I had just watched. Turns out I was belatedly discovering Do-yeon Jeon who'd won Best Actress at Cannes for the role. I don't know if Secret Sunshine is typical of Korean cinema but I'm off to the DVD store to discover more." 
Round-trip: i saw this film in the worst possible [UNK] id already [UNK] [UNK] minutes when i [UNK] up to it on an [UNK] [UNK] between [UNK] and [UNK] i didnt know what i was watching i thought maybe it was a movie of the [UNK] but quickly became [UNK] by the performance of the lead actress playing a young woman whos child had been [UNK] the premise started taking twist and turns i didnt see coming and by the end credits i was [UNK] through the the [UNK] [UNK] to figure out what i had just watched turns out i was [UNK] [UNK] [UNK] [UNK] [UNK] [UNK] best actress at [UNK] for the role i dont know if secret [UNK] is typical of [UNK] cinema but im off to the dvd [UNK] to [UNK] more Original: b"Hello. I am Paul Raddick, a.k.a. Panic Attack of WTAF, Channel 29 in Philadelphia. Let me tell you about this god awful movie that powered on Adam Sandler's film career but was digitized after a short time.<br /><br />Going Overboard is about an aspiring comedian played by Sandler who gets a job on a cruise ship and fails...or so I thought. Sandler encounters babes that like History of the World Part 1 and Rebound. The babes were supposed to be engaged, but, actually, they get executed by Sawtooth, the meanest cannibal the world has ever known. Adam Sandler fared bad in Going Overboard, but fared better in Big Daddy, Billy Madison, and Jen Leone's favorite, 50 First Dates. Man, Drew Barrymore was one hot chick. Spanglish is red hot, Going Overboard ain't Dooley squat! End of file." 
Round-trip: [UNK] i am paul [UNK] [UNK] [UNK] [UNK] of [UNK] [UNK] [UNK] in [UNK] let me tell you about this god awful movie that [UNK] on [UNK] [UNK] film career but was [UNK] after a short [UNK] br going [UNK] is about an [UNK] [UNK] played by [UNK] who gets a job on a [UNK] [UNK] and [UNK] so i thought [UNK] [UNK] [UNK] that like history of the world part 1 and [UNK] the [UNK] were supposed to be [UNK] but actually they get [UNK] by [UNK] the [UNK] [UNK] the world has ever known [UNK] [UNK] [UNK] bad in going [UNK] but [UNK] better in big [UNK] [UNK] [UNK] and [UNK] [UNK] favorite [UNK] first [UNK] man [UNK] [UNK] was one hot [UNK] [UNK] is red hot going [UNK] [UNK] [UNK] [UNK] end of [UNK]
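Both sources of information loss can be reproduced with a toy vocabulary. In this sketch, round_trip is a hypothetical helper, and standardize only approximates the default "lower_and_strip_punctuation" behavior:

```python
import re
import string

def standardize(text):
    # Approximation of "lower_and_strip_punctuation".
    return re.sub(f"[{re.escape(string.punctuation)}]", "", text.lower())

def round_trip(text, vocab):
    index = {token: i for i, token in enumerate(vocab)}
    ids = [index.get(tok, 1) for tok in standardize(text).split()]  # 1 is "[UNK]"
    return " ".join(vocab[i] for i in ids)

vocab = ["", "[UNK]", "the", "movie", "was", "great"]
restored = round_trip("The movie was GREAT, truly!", vocab)
print(restored)  # the movie was great [UNK]
```

The capitalization and punctuation disappear during standardization, and "truly", which is not in the vocabulary, comes back only as [UNK].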

Create the model

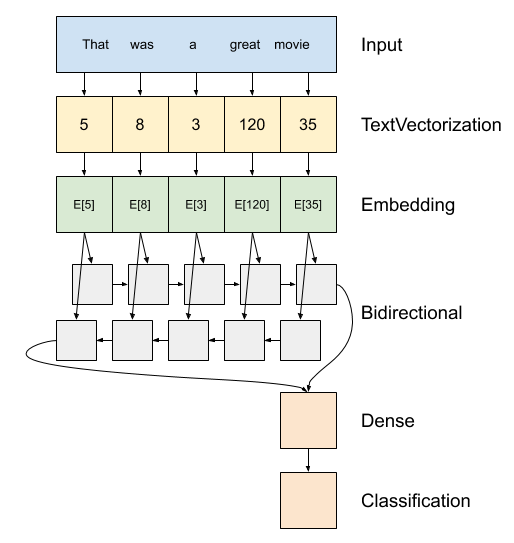

Above is a diagram of the model.

This model can be built as a tf.keras.Sequential. The first layer is the encoder, which converts the text to a sequence of token indices.

After the encoder is an embedding layer. An embedding layer stores one vector per word. When called, it converts sequences of word indices to sequences of vectors. These vectors are trainable. After training (on enough data), words with similar meanings often have similar vectors.

This index lookup is much more efficient than the equivalent operation of passing a one-hot encoded vector through a tf.keras.layers.Dense layer.

A recurrent neural network (RNN) processes sequence input by iterating through the elements. RNNs pass the outputs from one timestep to their input on the next timestep.
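An embedding lookup is equivalent to passing a one-hot vector through a dense layer, and a small plain-Python example can check this. It assumes a Dense layer without bias (use_bias=False) whose kernel is the embedding matrix. The numbers are made up:

```python
def one_hot(index, size):
    return [1.0 if i == index else 0.0 for i in range(size)]

def vec_matmul(vec, matrix):
    # (V,) @ (V, D) -> (D,): touches every row of the matrix.
    return [sum(vec[v] * matrix[v][d] for v in range(len(vec)))
            for d in range(len(matrix[0]))]

# Toy embedding matrix: vocabulary of 4 tokens, 2 dimensions per vector.
embedding = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6], [0.7, 0.8]]
token_id = 2

lookup = embedding[token_id]                         # reads a single row
dense = vec_matmul(one_hot(token_id, 4), embedding)  # full one-hot product
print(lookup, dense)  # [0.5, 0.6] [0.5, 0.6]
```

Both routes give the same vector. With VOCAB_SIZE = 1000 and 64-dimensional vectors, however, the one-hot route multiplies by a 1000-by-64 matrix for every token, while the lookup just reads one row.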

The tf.keras.layers.Bidirectional wrapper can also be used with an RNN layer. It propagates the input forward and backward through the RNN layer and then concatenates the final outputs. The main advantage of a bidirectional RNN is that the signal from the beginning of the input doesn't need to be processed all the way through every timestep to affect the output.

The main disadvantage of a bidirectional RNN is that you can't efficiently stream predictions as words are being added to the end.
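A toy scalar recurrence illustrates both points. The simple_rnn helper is a hypothetical stand-in for an RNN layer (it is not an LSTM). The bidirectional helper concatenates the final forward and backward states, analogous to the wrapper's default "concat" merge mode:

```python
import math

def simple_rnn(xs, w=0.5, u=0.8):
    """Toy scalar RNN: h_t = tanh(w * x_t + u * h_{t-1}); returns the final state."""
    h = 0.0
    for x in xs:
        h = math.tanh(w * x + u * h)
    return h

def bidirectional(xs):
    forward = simple_rnn(xs)                    # reads first to last
    backward = simple_rnn(list(reversed(xs)))   # reads last to first
    return [forward, backward]                  # concatenated final states

seq = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0]  # the only signal is at the start
forward, backward = bidirectional(seq)
print(forward, backward)
```

The single non-zero input sits at the start of the sequence. By the final forward state its effect has decayed over six steps, while the backward pass reads it last, so it dominates the backward state. The sketch also shows the streaming drawback: appending a token changes where the backward pass starts, so every backward state must be recomputed.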

After the RNN has converted the sequence to a single vector, the two layers.Dense do some final processing and convert this vector representation into a single logit as the classification output.

The code to implement this is below:

model = tf.keras.Sequential([
    encoder,
    tf.keras.layers.Embedding(
        input_dim=len(encoder.get_vocabulary()),
        output_dim=64,
        # Use masking to handle the variable sequence lengths
        mask_zero=True),
    tf.keras.layers.Bidirectional(tf.keras.layers.LSTM(64)),
    tf.keras.layers.Dense(64, activation='relu'),
    tf.keras.layers.Dense(1)
])
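As a rough check of the model's size, the trainable parameters can be counted by hand. This sketch assumes the vocabulary really has VOCAB_SIZE = 1000 entries. It uses the standard LSTM count of four gates, each with an input kernel, a recurrent kernel and a bias. Calling model.summary() on the built model is the authoritative way to confirm these numbers:

```python
def embedding_params(vocab_size, dim):
    return vocab_size * dim

def lstm_params(input_dim, units):
    # 4 gates, each with an input kernel, a recurrent kernel and a bias vector.
    return 4 * (units * (input_dim + units) + units)

def dense_params(input_dim, units):
    return input_dim * units + units  # kernel + bias

emb = embedding_params(1000, 64)
bilstm = 2 * lstm_params(64, 64)   # separate forward and backward LSTMs
dense1 = dense_params(2 * 64, 64)  # Bidirectional concatenates two 64-d outputs
dense2 = dense_params(64, 1)
total = emb + bilstm + dense1 + dense2
print(emb, bilstm, dense1, dense2, total)  # 64000 66048 8256 65 138369
```

Nearly half of the weights sit in the embedding table, which is one reason a small VOCAB_SIZE keeps this model light.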

Please note that a Keras sequential model is used here because every layer in the model has a single input and produces a single output. If you want to use a stateful RNN layer, you might want to build your model with the Keras functional API or model subclassing so that you can retrieve and reuse the RNN layer states. Check the Keras RNN guide for more details.

The Embedding layer uses masking to handle the varying sequence lengths. All the layers after the Embedding support masking:

print([layer.supports_masking for layer in model.layers])

[False, True, True, True, True]

To confirm that this works as expected, evaluate a sentence twice. First, evaluate it alone, so there's no padding to mask:

# predict on a sample text without padding.

sample_text = ('The movie was cool. The animation and the graphics '
               'were out of this world. I would recommend this movie.')
predictions = model.predict(np.array([sample_text]))
print(predictions[0])

[-0.00012211]

Now, evaluate it again in a batch with a longer sentence. The result should be identical:

# predict on a sample text with padding

padding = "the " * 2000
predictions = model.predict(np.array([sample_text, padding]))
print(predictions[0])

[-0.00012211]
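Padding does not change the prediction because masked timesteps are skipped. At a masked step, an RNN layer carries its previous state forward instead of updating it. A toy scalar recurrence shows the effect. Here masked_rnn is hypothetical, not Keras code, and the mask is derived from the zero padding index the same way mask_zero=True does:

```python
import math

def masked_rnn(token_ids, w=0.1, u=0.5):
    mask = [tok != 0 for tok in token_ids]  # index 0 is padding
    h = 0.0
    for tok, keep in zip(token_ids, mask):
        if not keep:
            continue  # masked step: keep the previous state unchanged
        h = math.tanh(w * tok + u * h)
    return h

unpadded = [11, 7, 4, 18]
padded = unpadded + [0] * 10
print(masked_rnn(unpadded) == masked_rnn(padded))  # True
```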

Compile the Keras model to configure the training process:

model.compile(loss=tf.keras.losses.BinaryCrossentropy(from_logits=True),
              optimizer=tf.keras.optimizers.Adam(1e-4),
              metrics=['accuracy'])
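The last Dense layer outputs a raw logit, so the loss is created with from_logits=True, which folds the sigmoid into the loss computation. The sketch below compares two forms of the loss. The first is the numerically stable logit form documented for tf.nn.sigmoid_cross_entropy_with_logits, max(z, 0) - z * y + log(1 + exp(-|z|)). The second is the textbook formula applied to sigmoid probabilities. The helper names are hypothetical:

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def bce_from_logit(y, z):
    # Stable form: never takes log() of a probability that may underflow to 0.
    return max(z, 0.0) - z * y + math.log1p(math.exp(-abs(z)))

def bce_from_prob(y, p):
    # Textbook binary cross-entropy on a probability p = sigmoid(z).
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))

# For moderate logits both forms agree.
for y, z in [(1, 2.0), (0, 2.0), (1, -1.68), (0, -0.5)]:
    assert abs(bce_from_logit(y, z) - bce_from_prob(y, sigmoid(z))) < 1e-9
```

For a large-magnitude logit such as z = -800, sigmoid(z) underflows to 0.0 and the probability form would need log(0). The logit form still returns 800.0.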

Train the model

history = model.fit(train_dataset, epochs=10,
                    validation_data=test_dataset,
                    validation_steps=30)

Epoch 1/10 391/391 [==============================] - 39s 84ms/step - loss: 0.6454 - accuracy: 0.5630 - val_loss: 0.4888 - val_accuracy: 0.7568 Epoch 2/10 391/391 [==============================] - 30s 75ms/step - loss: 0.3925 - accuracy: 0.8200 - val_loss: 0.3663 - val_accuracy: 0.8464 Epoch 3/10 391/391 [==============================] - 30s 75ms/step - loss: 0.3319 - accuracy: 0.8525 - val_loss: 0.3402 - val_accuracy: 0.8385 Epoch 4/10 391/391 [==============================] - 30s 75ms/step - loss: 0.3183 - accuracy: 0.8616 - val_loss: 0.3289 - val_accuracy: 0.8438 Epoch 5/10 391/391 [==============================] - 30s 75ms/step - loss: 0.3088 - accuracy: 0.8656 - val_loss: 0.3254 - val_accuracy: 0.8646 Epoch 6/10 391/391 [==============================] - 32s 81ms/step - loss: 0.3043 - accuracy: 0.8686 - val_loss: 0.3242 - val_accuracy: 0.8521 Epoch 7/10 391/391 [==============================] - 30s 76ms/step - loss: 0.3019 - accuracy: 0.8696 - val_loss: 0.3315 - val_accuracy: 0.8609 Epoch 8/10 391/391 [==============================] - 32s 76ms/step - loss: 0.3007 - accuracy: 0.8688 - val_loss: 0.3245 - val_accuracy: 0.8609 Epoch 9/10 391/391 [==============================] - 31s 77ms/step - loss: 0.2981 - accuracy: 0.8707 - val_loss: 0.3294 - val_accuracy: 0.8599 Epoch 10/10 391/391 [==============================] - 31s 78ms/step - loss: 0.2969 - accuracy: 0.8742 - val_loss: 0.3218 - val_accuracy: 0.8547

test_loss, test_acc = model.evaluate(test_dataset)

print('Test Loss:', test_loss)
print('Test Accuracy:', test_acc)

391/391 [==============================] - 15s 38ms/step - loss: 0.3185 - accuracy: 0.8582 Test Loss: 0.3184521794319153 Test Accuracy: 0.8581600189208984

plt.figure(figsize=(16, 8))
plt.subplot(1, 2, 1)
plot_graphs(history, 'accuracy')
plt.ylim(None, 1)
plt.subplot(1, 2, 2)
plot_graphs(history, 'loss')
plt.ylim(0, None)

(0.0, 0.6627909764647484)

Run a prediction on a new sentence:

If the prediction is >= 0.0, it is positive; otherwise it is negative.

sample_text = ('The movie was cool. The animation and the graphics '
               'were out of this world. I would recommend this movie.')
predictions = model.predict(np.array([sample_text]))
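Because the model outputs a logit, the >= 0.0 rule is the same as thresholding the sigmoid probability at 0.5: sigmoid(0) = 0.5, and the sigmoid is increasing. A small helper makes the conversion explicit. interpret_logit is hypothetical plain Python, and the example logits are arbitrary:

```python
import math

def interpret_logit(logit):
    probability = 1.0 / (1.0 + math.exp(-logit))  # sigmoid
    label = "positive" if logit >= 0.0 else "negative"
    return label, probability

for logit in (-1.68, 0.0, 2.5):
    label, prob = interpret_logit(logit)
    print(f"{logit:+.2f} -> {label} (p = {prob:.3f})")
```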

Stack two or more LSTM layers

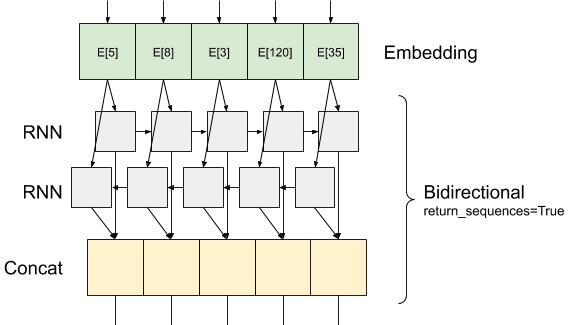

Keras recurrent layers have two available modes, controlled by the return_sequences constructor argument:

- If False, the layer returns only the last output for each input sequence (a 2D tensor of shape (batch_size, output_features)). This is the default, and it was used in the previous model.
- If True, the full sequence of successive outputs for each timestep is returned (a 3D tensor of shape (batch_size, timesteps, output_features)).
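The difference between the two modes shows most clearly in the output shapes. The sketch below uses a hypothetical toy recurrence over nested Python lists. It is not an LSTM, and its input projection is made up. It also shows that a 3D sequence output can feed a second recurrent layer:

```python
import math

def rnn_layer(batch, units, return_sequences=False):
    """Toy RNN over nested lists shaped (batch_size, timesteps, input_features)."""
    outputs = []
    for sequence in batch:
        h = [0.0] * units
        states = []
        for x in sequence:
            drive = 0.5 * sum(x)  # made-up input projection
            h = [math.tanh(drive + 0.5 * h_i) for h_i in h]
            states.append(h)
        # Either every timestep's state, or only the last one.
        outputs.append(states if return_sequences else states[-1])
    return outputs

def shape(nested):
    dims = []
    while isinstance(nested, list):
        dims.append(len(nested))
        nested = nested[0]
    return tuple(dims)

batch = [[[1.0], [2.0], [3.0]], [[0.5], [0.0], [-1.0]]]        # (2, 3, 1)
last_only = rnn_layer(batch, units=4)                          # (2, 4)
full_seq = rnn_layer(batch, units=4, return_sequences=True)    # (2, 3, 4)
stacked = rnn_layer(full_seq, units=2)                         # (2, 2)
print(shape(last_only), shape(full_seq), shape(stacked))       # (2, 4) (2, 3, 4) (2, 2)
```

Passing last_only to rnn_layer would fail, because each "timestep" would then be a single float rather than a feature list. This mirrors why a recurrent layer that feeds another one needs return_sequences=True.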

Here is what the flow of information looks like with return_sequences=True:

The interesting thing about using an RNN with return_sequences=True is that the output still has 3 axes, like the input, so it can be passed to another RNN layer, like this:

model = tf.keras.Sequential([
    encoder,
    tf.keras.layers.Embedding(len(encoder.get_vocabulary()), 64, mask_zero=True),
    tf.keras.layers.Bidirectional(tf.keras.layers.LSTM(64, return_sequences=True)),
    tf.keras.layers.Bidirectional(tf.keras.layers.LSTM(32)),
    tf.keras.layers.Dense(64, activation='relu'),
    tf.keras.layers.Dropout(0.5),
    tf.keras.layers.Dense(1)
])

model.compile(loss=tf.keras.losses.BinaryCrossentropy(from_logits=True),
              optimizer=tf.keras.optimizers.Adam(1e-4),
              metrics=['accuracy'])

history = model.fit(train_dataset, epochs=10,
                    validation_data=test_dataset,
                    validation_steps=30)

Epoch 1/10 391/391 [==============================] - 71s 149ms/step - loss: 0.6502 - accuracy: 0.5625 - val_loss: 0.4923 - val_accuracy: 0.7573 Epoch 2/10 391/391 [==============================] - 55s 138ms/step - loss: 0.4067 - accuracy: 0.8198 - val_loss: 0.3727 - val_accuracy: 0.8271 Epoch 3/10 391/391 [==============================] - 54s 136ms/step - loss: 0.3417 - accuracy: 0.8543 - val_loss: 0.3343 - val_accuracy: 0.8510 Epoch 4/10 391/391 [==============================] - 53s 134ms/step - loss: 0.3242 - accuracy: 0.8607 - val_loss: 0.3268 - val_accuracy: 0.8568 Epoch 5/10 391/391 [==============================] - 53s 135ms/step - loss: 0.3174 - accuracy: 0.8652 - val_loss: 0.3213 - val_accuracy: 0.8516 Epoch 6/10 391/391 [==============================] - 52s 132ms/step - loss: 0.3098 - accuracy: 0.8671 - val_loss: 0.3294 - val_accuracy: 0.8547 Epoch 7/10 391/391 [==============================] - 53s 134ms/step - loss: 0.3063 - accuracy: 0.8697 - val_loss: 0.3158 - val_accuracy: 0.8594 Epoch 8/10 391/391 [==============================] - 52s 132ms/step - loss: 0.3043 - accuracy: 0.8692 - val_loss: 0.3184 - val_accuracy: 0.8521 Epoch 9/10 391/391 [==============================] - 53s 133ms/step - loss: 0.3016 - accuracy: 0.8704 - val_loss: 0.3208 - val_accuracy: 0.8609 Epoch 10/10 391/391 [==============================] - 54s 136ms/step - loss: 0.2975 - accuracy: 0.8740 - val_loss: 0.3301 - val_accuracy: 0.8651

test_loss, test_acc = model.evaluate(test_dataset)

print('Test Loss:', test_loss)
print('Test Accuracy:', test_acc)

391/391 [==============================] - 26s 65ms/step - loss: 0.3293 - accuracy: 0.8646 Test Loss: 0.329334557056427 Test Accuracy: 0.8646399974822998

# predict on a sample text without padding.

sample_text = ('The movie was not good. The animation and the graphics '
               'were terrible. I would not recommend this movie.')
predictions = model.predict(np.array([sample_text]))
print(predictions)

[[-1.6796288]]

plt.figure(figsize=(16, 6))
plt.subplot(1, 2, 1)
plot_graphs(history, 'accuracy')
plt.subplot(1, 2, 2)
plot_graphs(history, 'loss')

Check out other existing recurrent layers, such as GRU layers.

If you're interested in building custom RNNs, see the Keras RNN guide.